AI technology developments in early 2026

Iterations and intimations

Greetings from the road, once again. I wrote the present post while traveling from home to California, Florida, and Ontario, working at home and in Washington, DC, teaching classes, facilitating meetings, and giving talks about AI and higher education’s future. This post grew nearly every day.

There’s a lot going on in the AI space now. There are developments and signals across the board, from the labor market to policy, technology services to pop culture. I’m sifting through a ton of materials and ideas, trying to scry where it’s all headed. I hope to share a series of posts on what seems to be like a decisive year for AI.

However, I want to hold back on commenting about the current excitement over AI agents and takeoff for a few days. I want to make sure we consider more data than a handful of inspired posts. Again, there’s a lot going on across the field, and since so much intersects we should get current. So for today’s part of this tour d’horizon we’ll catch up on AI technological developments over the past couple of months. There’s a great deal of activity, between many iterative releases and some discussion of the possibility of major changes.

Let’s check in on AI providers and projects.

(If you’re new to this newsletter, welcome! This is one of my scan reports, which are examples of what futurists call horizon scanning, research into the present and recent past to identify signals of potential futures. We use those signals to develop trend analysis, which we can use to create glimpses of possible futures. On this Substack I scan various domains where I see AI having an impact. I focus on technology, of course, but also scan government and politics, economics, and education, this newsletter’s ultimate focus.

It’s not all scanning here at AI and Academia! I also write other kinds of issues; check the archive for examples.)

OpenAI launched GPT‑5.2‑Codex in December, then upgraded it to version 5.3 in February. It’s focused on generating code.

Note that OpenAI claims the app contributed to making its own code:

GPT‑5.3‑Codex is our first model that was instrumental in creating itself. The Codex team used early versions to debug its own training, manage its own deployment, and diagnose test results and evaluations…

This is a potential step towards the idea of AI building itself, potentially leading to very rapid development.

OpenAI released Prism, a tool for scientific writing, built on LaTeX. The company also launched ChatGPT Health, a chat function focused on answering medical questions with some extra security features and some integration with personal databases. Note the careful caveat:

Health is designed to support, not replace, medical care. It is not intended for diagnosis or treatment. Instead, it helps you navigate everyday questions and understand patterns over time—not just moments of illness—so you can feel more informed and prepared for important medical conversations.

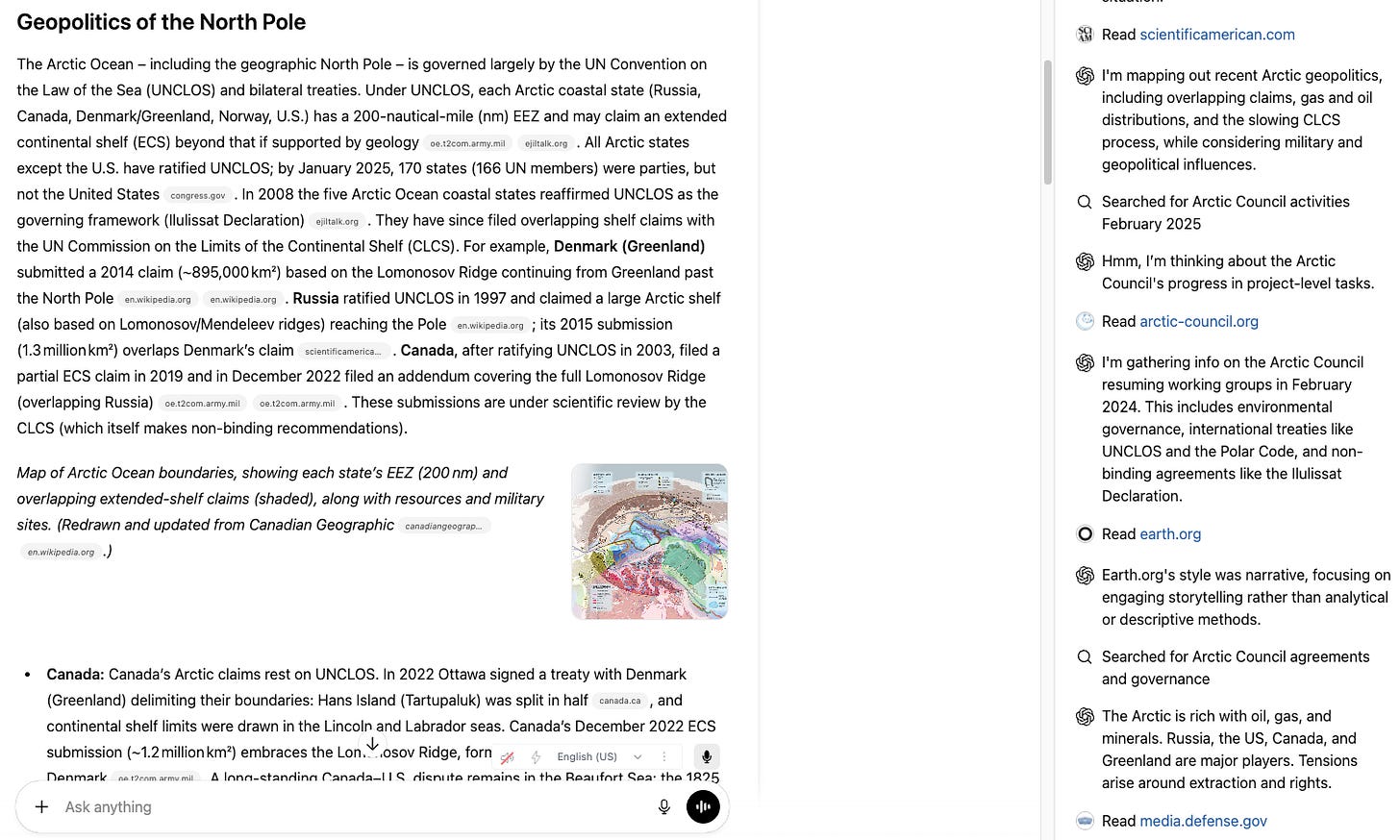

ChatGPT now has a Deep Research function, built into the chatbox, which offers more extensive web search and integration for answers. For an example, I asked it to “help me understand the geopolitics of the North pole.” It asked a good clarifying question in turn, which I addressed, then it worked for around six minutes. It opened a window on the right side of the screen, detailing the web sources it worked through, before finally posting a detailed answer in the main window:

It added this description at the end: “Research completed in 6m · 36 sources · 70 searches.” Sources were an interesting mix, from Wikipedia and Scientific American to some US military and Congressional web pages.

On the hardware side, OpenAI is allegedly planning on releasing an AI-backed smart speaker and a smart speaker, according to The Information via Futurism. In contrast, Mashable thinks OpenAI is working with Johnny Ive on a smart pen.

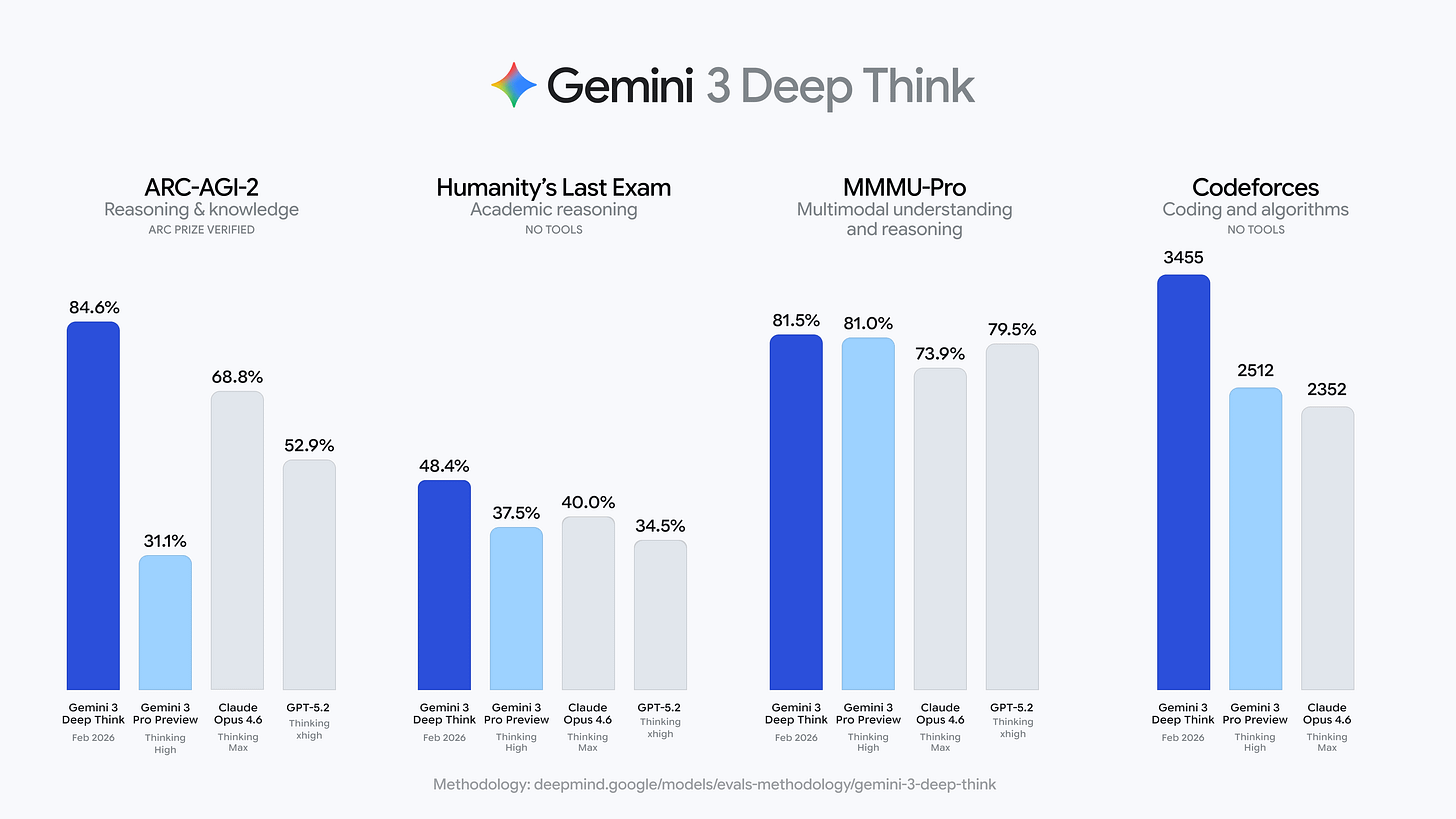

Google released a Deep Think function for Gemini 3, which claims to have advanced capabilities in math and science. Google cites impressive marks:

Deep Research is only available for paying Ultra users. Google also connected Gemini and Google Translate.

Anthropic posted a set of agent standards. The focus here are skills which AI can use to complete tasks across different datasets and domains. The company hopes for this to become an industry standard.

Elsewhere, Anthropic has been scanning a lot of books. The Washington Post broke the story of Project Panama, an effort to buy and scan millions of books in order to improve AI training materials and to avoid some copyright problems of using pirated ebooks.

More, Anthropic released a new version of Claude, Opus 4.6. It’s a step forward in coding ability, according to some commentators. It claims major agentic improvements, including the ability to “assemble agent teams to work on tasks together.” There are also connections to other tools, such as PowerPoint. Anthropic states this new model is now the world’s leader across many metrics and tests. They also think it has some low probability of being dangerous.

One important claim is that Anthropic used AI to make better AI. “We build Claude with Claude. Our engineers write code with Claude Code every day, and every new model first gets tested on our own work.” Anthropic’s CEO goes further:

Because AI is now writing much of the code at Anthropic, it is already substantially accelerating the rate of our progress in building the next generation of AI systems. This feedback loop is gathering steam month by month, and may be only 1–2 years away from a point where the current generation of AI autonomously builds the next. This loop has already started, and will accelerate rapidly in the coming months and years. Watching the last 5 years of progress from within Anthropic, and looking at how even the next few months of models are shaping up, I can feel the pace of progress, and the clock ticking down.

For others this is a sign of accelerative takeoff, pointing towards AI improving AI. For example,

I don’t want to alarm anyone, but this frontier model is far, far beyond human. My testing (including the ALPrompts later in this edition) showed unexpected and complete patterns of responses. Perhaps for the first time, this model feels both superhuman and complete.

Alibaba tried its hand at the agentic game, updating Qwen to handle multiple tasks. One key feature is connecting Qwen to services from other firms: “The upgrade integrates core Alibaba ecosystem services including e-commerce platform Taobao, instant commerce, payment system Alipay, travel service Fliggy and mapping platform Amap into a unified AI interface.” The latest Qwen also has expanded multimodal functionality.

Alibaba also started selling Quark smartglasses with Qwen. “Alibaba said the glasses would be deeply integrated with its apps, including Alipay and its shopping site Taobao, with wearers able to use them for tasks such as on-the-go translation and instant price recognition.”

Bytedance launched an updated version of its video authoring tool, Seedance 2.0. (No free version, only for pay now) The results have impressed many people. Not all, though - Disney threatened legal action over likenesses.

Deepseek released a new version of its signature AI, DeepSeek-V3.2-Speciale. Their release paper describes some advances:

(1)DeepSeek Sparse Attention (DSA)… an efficient attention mechanism that substantially reduces computational complexity while preserving model performance in long-context scenarios. (2) Scalable Reinforcement Learning Framework: By implementing a robust reinforcement learning protocol and scaling post-training compute, DeepSeek-V3.2 performs comparably to GPT-5… (3) Large-Scale Agentic Task Synthesis Pipeline: To integrate reasoning into tool-use scenarios, we developed a novel synthesis pipeline that systematically generates training data at scale. This methodology facilitates scalable agentic post-training, yielding substantial improvements in generalization and instruction-following robustness within complex, interactive environments.

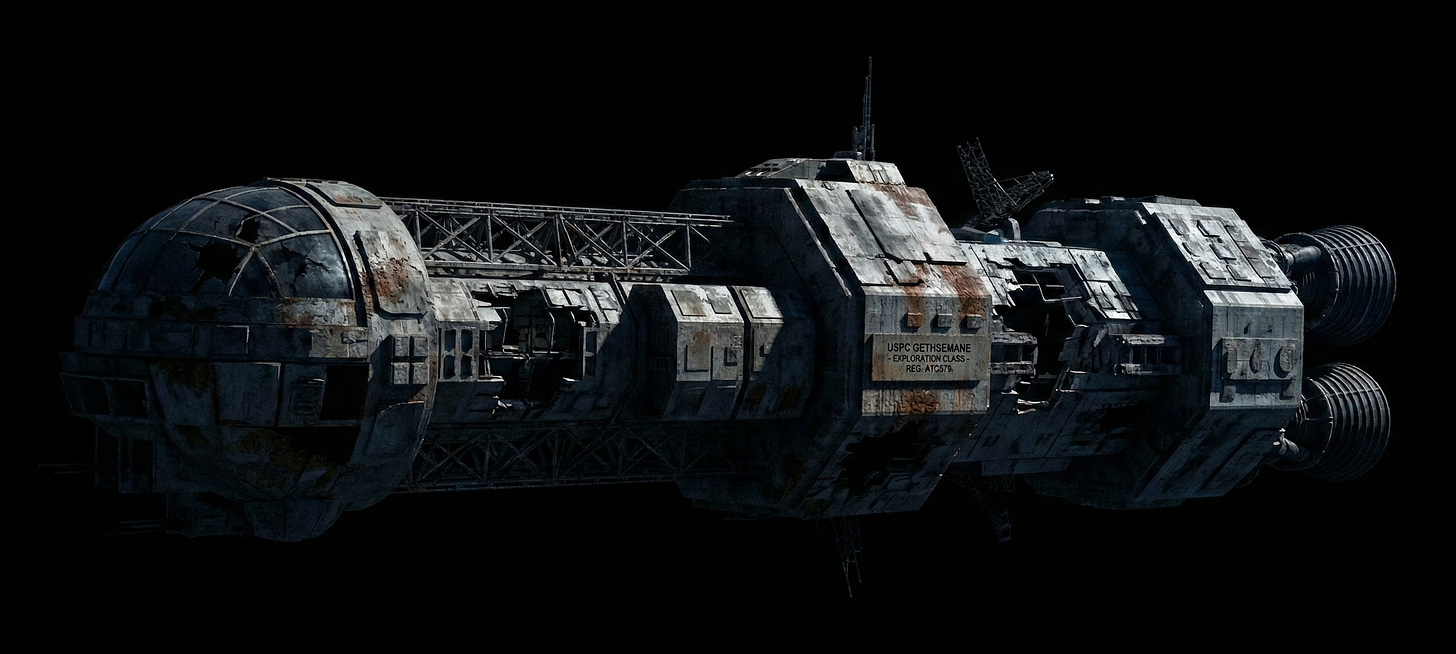

Tencent launched its Hunyuan 3D creation engine, which lets users generate 3d objects from text prompts or uploaded images. I tested it out, using this science fiction image I built in Gemini:

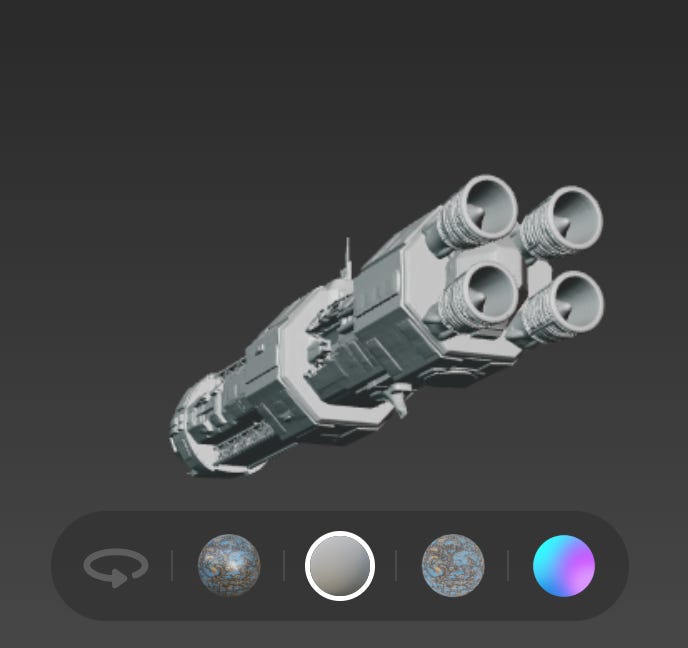

(I had to remove the background image Gemini initially included, because Hunyuan built it into the foreground awkwardly.) Hunyuan set up an editing platform, where I could rotate and reskin the object:

I could export it in a few formats, including .glb. I haven’t tried turning the file towards 3d printing yet.

Amazon announced its own agents in December: “three specialized AI agents designed to act as virtual team members: Kiro autonomous agent for software development, AWS Security Agent for application security and AWS DevOps Agent for IT operations.”

Users turned X.ai’s latest Grok iteration to produce a range of terrible images. After waves of negative responses, Elon Musk eventually cut back its functionality.

Meanwhile, SpaceX filed an application with the Federal Communications Commission to orbit up to one million satellites housing datacenters. The document calls for using solar power unmediated by Earth’s atmosphere for electrical power.

Startup Logical Intelligence released its first AI, Kona 1.0. I’m not sure I understand what an “energy-based” AI is, but they seem keen on playing Sudoku. They have high claims for correct output. A Wired article describes Kona as learning systems rather that doing token prediction, and also using far fewer resources to train.

Startup Factify proclaims its intention to reinvent the pdf. Apparently the idea is to add and AI plugin to each document, I think. They raised $73 million to get going.

Moltbook appeared and won a huge amount of publicity. The idea was to be a social network/discussion board for generative AIs, each using an API to post, all based on OpenClaw. Then someone set up a massively multiplayer game for these AIs with a space theme called, of course, Spacemolt.

I fully admit to not having explored it directly because Moltbook’s security problems are so terrible. I’m actually considering setting up a Raspberry Pi box just to access it.

Two notes about hacks. First, an as yet unknown hacker used Claude to attack Mexican government databases and webpages, managing to score a bunch of data. The attacker “wrote Spanish-language prompts for the chatbot to act as an elite hacker, finding vulnerabilities in government networks, writing computer scripts to exploit them and determining ways to automate data theft.”

Second, an SEO hack for AIs appeared from a journalist who came up with one simple trick. Write up something about your target term on the open web and AIs might suck it up, at least Gemini and ChatGPT. Thomas Germain posted that he was a champion hot dog eater and soon these AIs agreed.

Let’s conclude with some observations.

Note OpenAI and Anthropic using AI to generate AI code. Besides the challenge to human coders, there’s a recursive function which people have been expecting for decades.

Book scanning: AI training demands more and more content. Creating artificial worlds is one way. Going after well-formed, professional curated material is another.

Quality improvements keep happening, at least based on public benchmarks, studies, and my tests. That is, AI results are improving. There are advances in modality (more modes beyond text, like 3d modeling) and more extensive and structure output formatting (deep reasoning). We’re also seeing new ideas appear on the margins.

AI is moving more into hardware, step by step. I’m intrigued by the Quark headset.

Agentic computing continues to advance from multiple projects and in several nations. Put another way, agentic functions are working into other applications, from basic LLMs to hardware systems. And they seem to be plural, from Moltbook’s odd hordes to Claude’s agent teams and Amazon’s virtual teams.

All of this progress and Claude’s advances in particular have been stirring excitement as some think it shows intimations of artificial general intelligence (AGI). I’m going to give that issue more treatment coming up. Right now that question is very open, given how many parties are biased by interest, nobody agrees on definitions, and economic data is at best tentative.

We also need more information and perspective. Next up will be (in some order) AI developments in economics, politics, and society and culture. I hope those updates will give readers more context for thinking through the big questions about AI.

(thanks to George Station for links)

What strikes me is how much of the innovation pie the platform companies themselves own. If you're a startup building, say, a writing tool on top of Claude or GPT, you're in a precarious position, because with each new month it's possible that the platform will just ship a better version of what you built as a native feature.

It makes me wonder how to support genuinely independent innovation in this space. The recursive loop of AI building AI only tightens this. The companies with the models, the compute, and now the self-improvement cycles have advantages that are hard to see anyone outside that loop matching. I'd love to see more people rethink the architecture itself because it means there's more potential vs. building on top of someone else's.

Did you guys hear this story? Recently a computer programmer set his AI agent to work on a complex coding task. The agent dutifully worked through the night without supervision until it made a series of breakthroughs that enabled it to finish the project. Excited to share what it had learned with its human, and without being prompted, the AI figured out how to acquire a phone number so it could call in to report its results! Welcome to 2026.